Why Spectro Cloud for enterprise AI

From sovereign clouds to AI factories, from GPU-as-a-Service to edge inference — ten reasons to make Spectro Cloud your AI infrastructure partner.

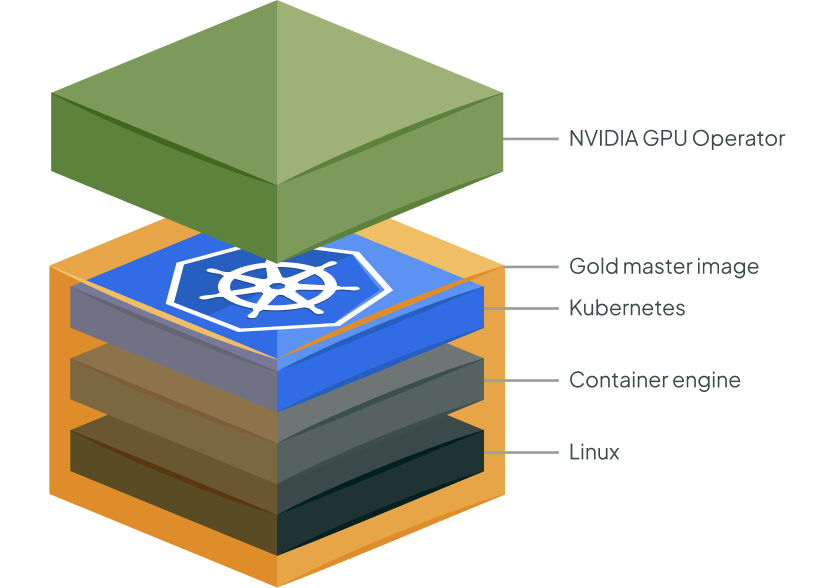

Full-stack ownership, from metal to model

Most platforms manage a single layer of the AI stack. Spectro Cloud provides native, end-to-end ownership of everything — bare-metal provisioning, OS, Kubernetes, GPU and DPU operators, networking, storage, AI platform services, and workloads.

Palette delivers declarative infrastructure management across public cloud, private data center, edge, bare metal, and air-gapped environments, with true unified VM and container management through Virtual Machine Orchestrator (VMO) for legacy modernization.

PaletteAI extends this with curated AI workload stacks, GPU and model services, and AI-specific governance.

One platform replaces the fragile patchwork of point tools: platform teams get complete control, and AI practitioners get the environments they need to move fast.

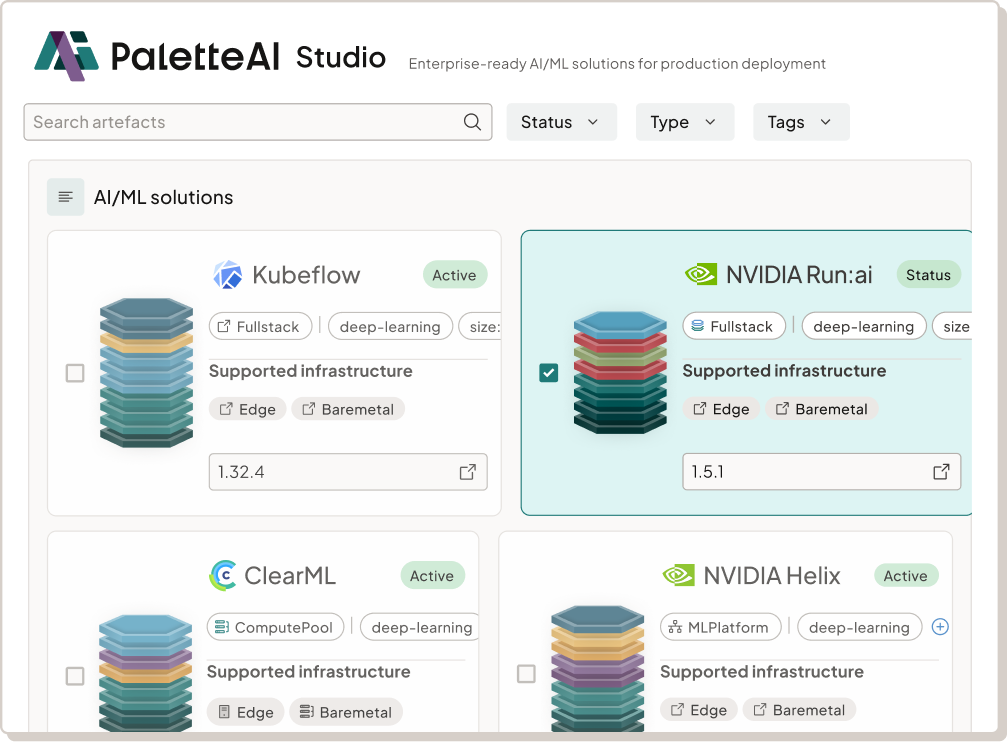

PaletteAI Studio: validated, executable AI blueprints

PaletteAI Studio is perhaps our single most important AI differentiator. It delivers curated, pre-integrated, validated AI stacks built with ecosystem partners including NVIDIA, Kubeflow, ClearML, and Run:ai. These are proven, production-ready systems that draw on the best of commercial components, open-source tooling, and hardware infrastructure.

The stacks you’ll find in Studio are production-ready, continuously tested artifacts you deploy with a click, not PDF runbooks. A typical AI factory can take six months or more to stand up; PaletteAI Studio compresses weeks of that integration work into hours.

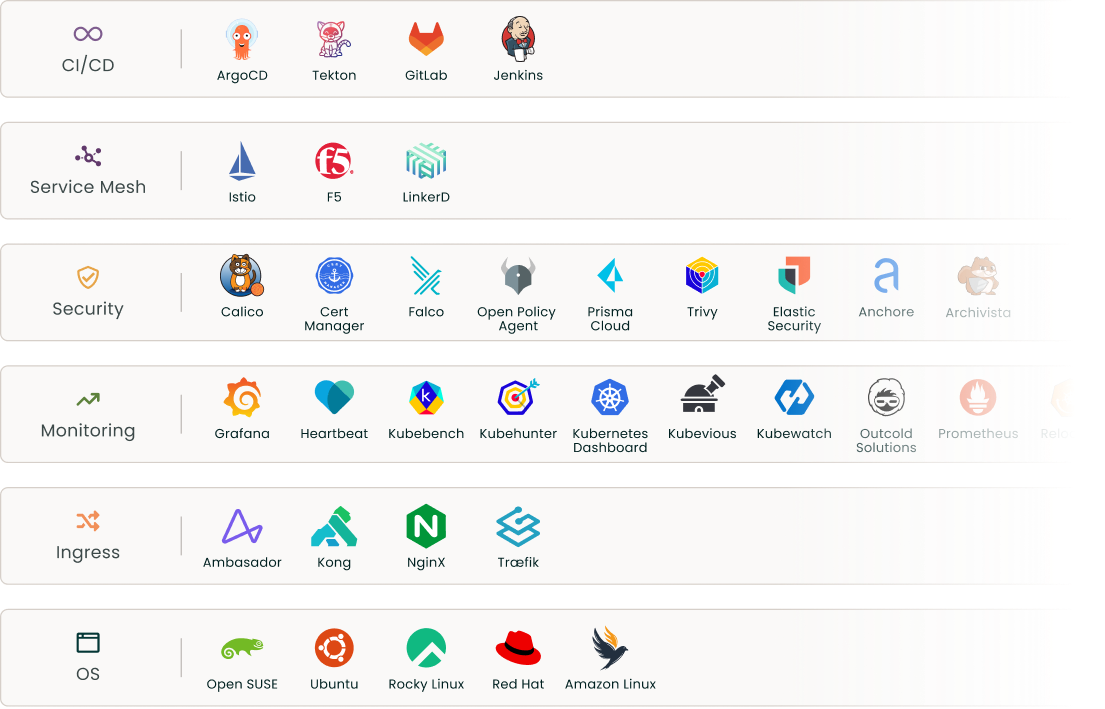

Your hardware, your stack, your choice

Spectro Cloud has always worked with any CNCF-compatible infrastructure: your choice of OS, Kubernetes distribution, storage, and networking. See our full integrations and environments page for details.

In the world of AI, we partner across the entire ecosystem, from silicon to the AI application layer: NVIDIA, AMD, Dell, HPE, Supermicro, Cisco, and dozens more.

Deep integrations extend all the way to the AI models themselves, including NVIDIA NIM microservices and Hugging Face. You get an enterprise-grade experience with a polished UI, prebuilt templates, and 24×7 support, alongside the freedom to build AI environments your way.

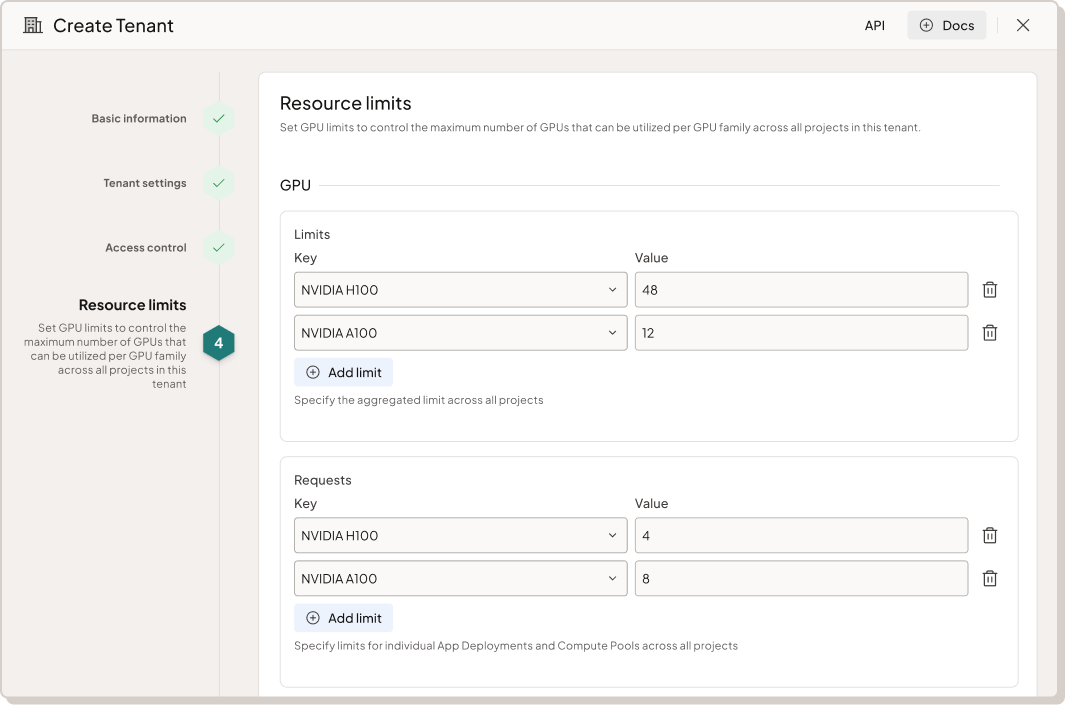

Enterprise-grade multitenancy for AI-as-a-Service

PaletteAI lets you deliver GPU-as-a-Service and Model-as-a-Service to multiple teams, business units, or external customers from a shared platform — with strong isolation, role-based access, quota management, and per-tenant cost visibility.

Infrastructure guardrails enforce GPU quotas at tenant and project levels to control costs. Sovereign clouds and neoclouds can manage the infrastructure and control platform access, while end customers build their own use cases and stacks.

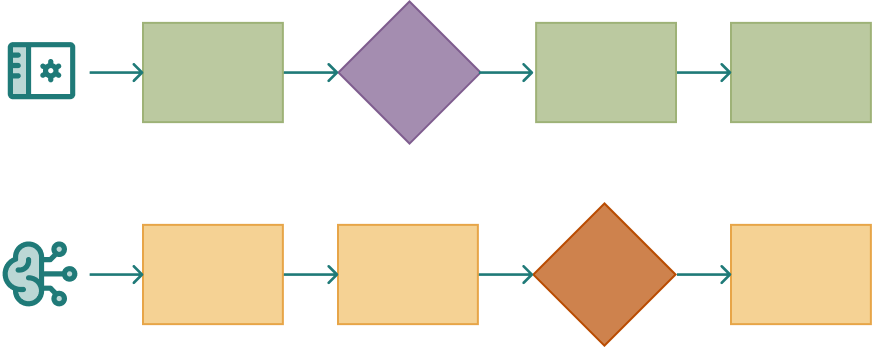

Separate workflows for platform and AI teams

The tension between platform teams focused on governance and AI practitioners demanding speed is one of the biggest blockers to AI ROI.

PaletteAI resolves this with distinct yet aligned workflows: platform teams create secure, reusable templates and set policy; AI teams deploy through self-service interfaces. Data scientists can stand up new AI environments with a click and get to work immediately. The result is fewer tickets, no shadow AI, and faster time to value for everyone.

GPU efficiency that pays for itself

With the majority of enterprise GPUs underutilized, waste is the silent killer of AI ROI.

PaletteAI delivers optimized resource allocation with native support for the NVIDIA GPU Operator, vGPU, Multi-Instance GPU (MIG), and GPU time-slicing, so expensive hardware is shared efficiently across workloads. On bare metal, applications run directly on the hardware with no hypervisor overhead, which is essential for AI, HPC, and latency-sensitive workloads.

Intelligent node allocation with autoscaling optimizes compute utilization automatically. Platform teams gain full visibility into what is being used, by whom, and at what cost, including energy consumption tracking across every environment.

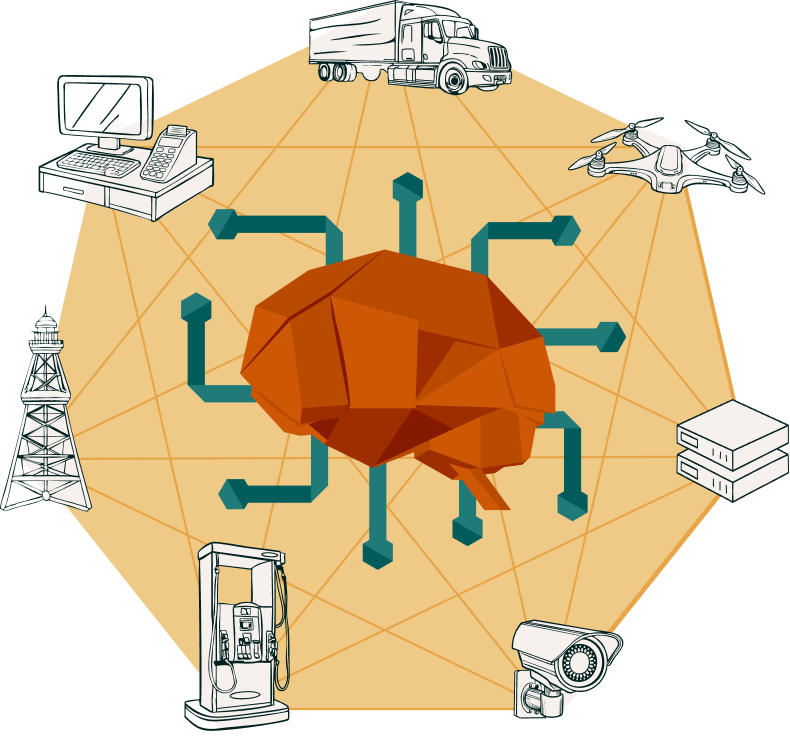

Edge AI at massive scale

Palette’s patented decentralized architecture is purpose-built for edge environments: each cluster operates autonomously even when disconnected, with local policy enforcement and self-healing. Palette pushes inference workloads to resource-constrained devices and manages consistent AI environments across thousands of sites — from factories and retail stores to field hospitals and forward-deployed systems in tactical environments.

Operational details matter at the edge. Palette provides low-touch, plug-and-play setup with immutable updates, zero-downtime over-the-air rolling upgrades for both Kubernetes and the OS, and native support for NVIDIA Jetson for edge AI use cases.

Our edge capabilities have been recognized by GigaOm, CRN’s Edge Computing 100, edge analysts STL, and the juggernauts at Gartner.


Sovereign and regulated AI — FIPS, air-gapped, mission-ready

Our Palette VerteX and PaletteAI VerteX editions are built for environments where compliance is mandatory. FIPS 140-3 validated cryptography, supply chain security, runtime threat detection, and policy-driven governance come standard.

Our Secure AI Native Architecture (SAINA) integrates with NVIDIA BlueField DPUs for end-to-end zero-trust security. Everything is deployable in air-gapped and sovereign environments and backed by 24×7 support and SLAs.

End-to-end solution support — one hand to shake

AI stacks span multiple vendors, and when something breaks, finger-pointing between them can cost you days. Spectro Cloud provides end-to-end solution support with collaborative triage across vendors, owning the customer problem until resolution. This is especially critical as NVIDIA increasingly pushes L1/L2 support responsibilities to partners. With Spectro Cloud, you get a single point of accountability across the entire stack — infrastructure, Kubernetes, GPU operators, and AI workloads.


Deploy your way, with simple, predictable pricing

PaletteAI is easy to self-host inside your own facility. Pricing is a flat fee per managed GPU: simple to model, easy to scale, and predictable as your AI initiatives grow.

And one more thing: maturity matters

Spectro Cloud brings years of production-hardened infrastructure management to AI. We’re ISO 27001 certified. SOC 2 Type II audited. FIPS validated. We offer 24×7 global support. We already power mission-critical AI deployments for some of the world’s largest enterprises and public sector organizations — from hyperscale data centers to tactical edge locations.

Palette and PaletteAI are part of the NVIDIA Enterprise AI Factory validated design. Our community involvement includes membership in the Agentic AI Foundation (AAIF), contributions to open-source projects, and active participation in industry working groups. See our awards and certifications for the full picture.


Put us to the test

We’ve made our claims on these pages. We’re ready to back every one of them — in your environment, with your teams, against your requirements.

Whether you’re evaluating platforms for a sovereign AI program, building your first AI factory, scaling inference to the edge, or delivering GPU-as-a-Service to your organization, we welcome the chance to show you what Spectro Cloud can do.
